In the first of three exclusive articles, Dennis Marcus of DIL simulation specialist Cruden provides an overview of how much simulator visuals matter, depending on the type of testing being undertaken.

All driver-in-the-loop (DIL) simulation content starts with a 3D virtual environment, but the nature, extent and components of that environment will differ depending on the simulation work that needs to be done.

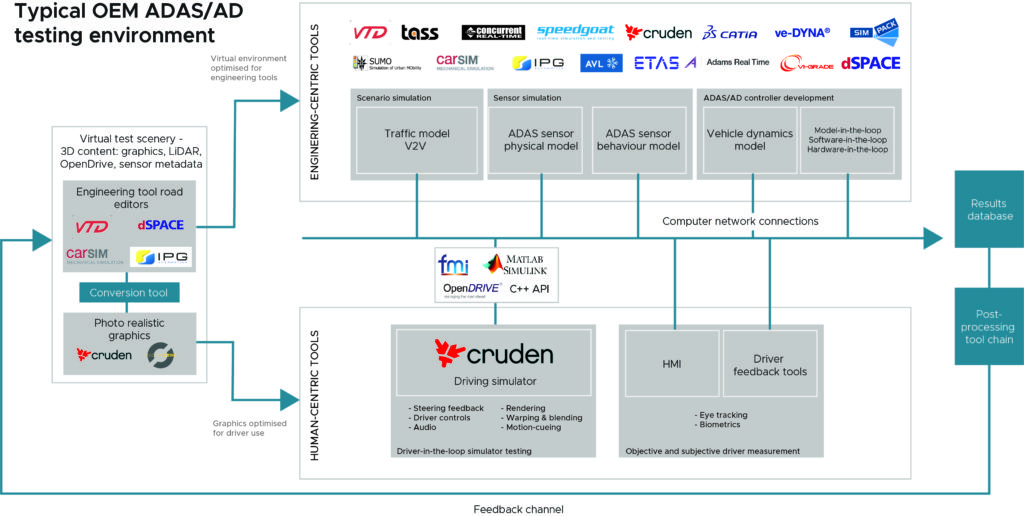

The schematic below identifies two different simulation paths, engineering-centric and human-centric, defined by their different aims and tools. The distinction matters when deciding what 3D content a simulation needs.

The environment for testing vehicle sensors – and how they collaborate in sensor simulation or sensor fusion – requires scenario editors and tools that enable engineers to quickly devise road layouts to simulate new use cases. This engineering-centric approach is represented in the upper level of the schematic of the ADAS/AD virtual test environment.

Here, the visuals need not be photorealistic; they need only be sufficient for engineering use. The 3D element is presented as part of the simulation only when required.

If the sensor for which you’re building content is the human eye, however, you need more detail, and a different type of detail, to create the most realistic view: one that will impress and immerse a human driver in a DIL simulator. This is represented by the lower level of the schematic.

At Cruden, our 3D artists create highly detailed, graphically rich, human-centric 3D content for driving simulators. For engineers to get the most out of sessions with a large number of ordinary drivers in a driving simulator, these non-expert participants must be presented with a realistic environment. Without it, they will never become fully immersed and will not behave as if they were driving a real car. Here, the graphical quality of the road or track is crucial to the value of the driver feedback data being collected.

Of course, the driving simulation will still require sensors that provide an object list and situational awareness, both to give the ADAS controller the inputs it needs to make its decisions and to present the human with a realistic scenario: for example, whether there is a car in front and what speed it is traveling at. But with the focus on the driver, the sensors do not need to be fully modeled.

Cruden believes that in 95% of cases where a simulator is used for DIL testing in ADAS and AD experiments, ground-truth sensors, which report object data taken directly from the simulation state, are sufficient. Yet it sees many OEMs and Tier 1 suppliers using advanced, physics-based sensor models in their driving simulators while developing and validating ADAS controllers.
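To illustrate how simple a ground-truth sensor can be, here is a minimal sketch. It is not Cruden's implementation; the `SceneObject` type, the `ground_truth_sensor` function and its `max_range` and `half_fov_deg` parameters are hypothetical. The sensor simply filters the simulation's own object list by range and field of view, with no noise, occlusion or false positives, which is exactly what a physics-based model would add.

```python
from dataclasses import dataclass
from math import atan2, degrees, hypot


@dataclass
class SceneObject:
    # Hypothetical scene object, expressed in the ego vehicle's frame.
    obj_id: int
    x: float      # metres ahead of the ego vehicle
    y: float      # metres to the left of the ego vehicle
    speed: float  # m/s


def ground_truth_sensor(objects, max_range=150.0, half_fov_deg=30.0):
    """Return an object list of everything within range and field of view.

    Data comes straight from the simulation state: no noise model,
    no occlusion and no false positives (assumed, illustrative values).
    """
    detected = []
    for obj in objects:
        distance = hypot(obj.x, obj.y)
        bearing = degrees(atan2(obj.y, obj.x))
        if distance <= max_range and abs(bearing) <= half_fov_deg:
            detected.append({"id": obj.obj_id, "distance": distance, "speed": obj.speed})
    # Nearest object first, as an ADAS controller would typically consume it.
    return sorted(detected, key=lambda d: d["distance"])


scene = [
    SceneObject(1, 40.0, 0.0, 25.0),   # lead car, straight ahead
    SceneObject(2, 10.0, 20.0, 0.0),   # parked car, outside the field of view
    SceneObject(3, 200.0, 0.0, 30.0),  # beyond sensor range
]
print(ground_truth_sensor(scene))
```

Only the lead car is reported. Because the output is deterministic, a driver never has to sit through hours of driving waiting for a sensor artifact to appear.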

This way of working can lead to hours of unnecessary driving in the simulator, waiting for a false positive to occur so that its effect on the driver can be evaluated. We read about the billions of kilometers of testing required for AD, but those sensor systems and AD controllers can be developed using offline simulation.

By contrast, human testing should focus on the interaction between the driver and the automated systems in the car. Nevertheless, those billions of kilometers of offline simulation are important in providing the input for the DIL testing scenarios.

Care must also be taken when talking to third-party content suppliers about modeling graphics. Not every supplier can build a model that stays synchronized with data such as the OpenDRIVE road definition, the point cloud or the road mesh.

For a DIL simulator experience to work, the different content layers have to match. The tire models interact with the detailed point cloud definition of the road, while the graphics models of the tires must sit on the rendered road surface, appearing neither above nor below it. It’s not easy. Very few 3D graphics design outfits can do it properly for a driving simulator used in automotive engineering.
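One way to picture the layer-matching problem is as a consistency check between the physics road surface and the rendered one. The sketch below is an assumption-laden illustration, not a description of Cruden's tooling: the function names, the sample data and the 1 cm `tolerance` are hypothetical. For each point where the graphics mesh height is known, it looks up the nearest point-cloud sample and flags any vertical offset that would make a wheel appear to float or sink.

```python
from math import hypot


def nearest_height(point_cloud, x, y):
    """Height of the point-cloud sample closest to (x, y) in the ground plane."""
    _, _, z = min(point_cloud, key=lambda p: hypot(p[0] - x, p[1] - y))
    return z


def layer_mismatches(point_cloud, graphics_heights, tolerance=0.01):
    """Return (x, y, offset) wherever graphics and physics surfaces disagree.

    graphics_heights maps (x, y) -> z of the rendered road mesh (hypothetical format).
    A positive offset means the rendered road sits above the physics surface,
    so a wheel placed at the physics contact point would appear to sink into it;
    a negative offset means the wheel would appear to float.
    """
    mismatches = []
    for (x, y), z_gfx in graphics_heights.items():
        offset = z_gfx - nearest_height(point_cloud, x, y)
        if abs(offset) > tolerance:
            mismatches.append((x, y, offset))
    return mismatches


# Illustrative data only: the physics road rises gently, one graphics vertex is 5 cm too high.
cloud = [(0.0, 0.0, 0.00), (1.0, 0.0, 0.02), (2.0, 0.0, 0.05)]
mesh = {(0.0, 0.0): 0.00, (1.0, 0.0): 0.02, (2.0, 0.0): 0.10}
print(layer_mismatches(cloud, mesh))
```

A production pipeline would use a spatial index rather than a linear nearest-neighbor search, but the principle of comparing layers sample by sample is the same.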

Just as a vehicle model uses the road definition and its interaction with the tire model to calculate steering torque, in ADAS controller testing the vehicle models interact with other elements of the virtual environment. This requires a deep understanding of the OpenDRIVE layers, the logical road definition that has become the standard in automotive simulation.
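As a small example of what the logical road definition contains, OpenDRIVE describes a road's height along its reference line as a series of `elevation` records, each a cubic polynomial elev(ds) = a + b·ds + c·ds² + d·ds³, where ds is the distance from the record's start position `s`. The sketch below parses a hand-written, deliberately minimal fragment (a real file also carries a header, and newer versions a namespace) and evaluates the elevation at a given `s`; the helper names are hypothetical.

```python
import xml.etree.ElementTree as ET

# Minimal, hand-written OpenDRIVE-style fragment for illustration (not a complete valid file).
XODR = """<OpenDRIVE>
  <road id="1" length="200.0">
    <elevationProfile>
      <elevation s="0.0" a="0.0" b="0.02" c="0.0" d="0.0"/>
      <elevation s="100.0" a="2.0" b="0.0" c="0.0" d="0.0"/>
    </elevationProfile>
  </road>
</OpenDRIVE>"""


def elevation_records(road):
    """Read (s, a, b, c, d) tuples from a road's elevationProfile, sorted by s."""
    profile = road.find("elevationProfile")
    return sorted(
        tuple(float(e.get(key)) for key in ("s", "a", "b", "c", "d"))
        for e in profile.findall("elevation")
    )


def elevation_at(records, s):
    """Evaluate the cubic of the last record starting at or before s."""
    s0, a, b, c, d = max((r for r in records if r[0] <= s), key=lambda r: r[0])
    ds = s - s0
    return a + b * ds + c * ds**2 + d * ds**3


road = ET.fromstring(XODR).find("road")
records = elevation_records(road)
print(elevation_at(records, 50.0), elevation_at(records, 150.0))
```

In this fragment the road climbs at 2% for 100 m and then stays level at 2 m; both the tire-contact calculation and the graphics mesh need to agree with heights like these.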